Part 2: AgentCore Gateway & Memory

Introduction

In Part 1, I established the foundation with Terraform infrastructure and Cognito authentication. In this article, I add the intelligence layer: AgentCore Gateway for serverless tools and AgentCore Memory for persistent context.

This article covers:

- Creating Lambda-based tools accessible via MCP

- Deploying the AgentCore Gateway

- Setting up AgentCore Memory with multiple strategies

- Integrating memory hooks for context-aware conversations

AgentCore Gateway: Serverless Tools via MCP

The Gateway transforms AWS Lambda functions into MCP (Model Context Protocol) tools that agents can discover and invoke. This enables clean separation between agent logic and tool implementation.

Why Gateway?

- Scalability: Lambda auto-scales with demand

- Flexibility: Update tools without redeploying the agent

- Security: Tools run in isolated Lambda environments with specific IAM permissions

- Protocol Abstraction: The agent doesn’t need to know Lambda-specific invocation details

The Gateway Tools

This implementation includes two Lambda tools:

- check_warranty_status: Looks up product warranty information

- web_search: Searches the web using DuckDuckGo

Implementing Lambda Tools

Lambda Handler Structure

All tools share a common handler in gateway/lambda_function.py:

    from check_warranty import check_warranty_status
    from web_search import web_search


    def get_named_parameter(event, name):
        """Return the named parameter from the event, or None if it is missing."""
        return event.get(name)


    def lambda_handler(event, context):
        """Lambda handler for AgentCore Gateway tools."""
        # Extract tool name from context; it arrives as "<target>___<tool>"
        extended_tool_name = context.client_context.custom["bedrockAgentCoreToolName"]
        resource = extended_tool_name.split("___")[1]

        if resource == "check_warranty_status":
            serial_number = get_named_parameter(event=event, name="serial_number")
            customer_email = get_named_parameter(event=event, name="customer_email")

            if not serial_number:
                return {"statusCode": 400, "body": "Please provide serial_number"}

            try:
                warranty_status = check_warranty_status(
                    serial_number=serial_number,
                    customer_email=customer_email,
                )
                return {"statusCode": 200, "body": warranty_status}
            except Exception as e:
                return {"statusCode": 400, "body": f"Error: {e}"}

        elif resource == "web_search":
            keywords = get_named_parameter(event=event, name="keywords")
            # Fall back to defaults when optional parameters are omitted
            region = get_named_parameter(event=event, name="region") or "us-en"
            max_results = get_named_parameter(event=event, name="max_results") or 5

            if not keywords:
                return {"statusCode": 400, "body": "Error: Please provide keywords"}

            try:
                search_results = web_search(
                    keywords=keywords,
                    region=region,
                    max_results=int(max_results),
                )
                return {"statusCode": 200, "body": search_results}
            except Exception as e:
                return {"statusCode": 400, "body": f"Error: {e}"}

        return {"statusCode": 400, "body": f"Error: Unknown tool: {resource}"}

Key Points:

- Single Lambda handles multiple tools via routing

- Tool name extracted from AgentCore context

- Parameter validation before execution

- Consistent error handling
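To try the routing step without deploying anything, the tool-name extraction can be exercised on its own. The following is a minimal sketch, not part of the original code: `resolve_tool_name`, `make_fake_context`, and the target name `LambdaTarget` are hypothetical names introduced for illustration, and the fake context only mimics the one attribute the handler reads.

```python
from types import SimpleNamespace

TOOL_NAME_KEY = "bedrockAgentCoreToolName"  # key read by the handler above


def resolve_tool_name(context) -> str:
    """Return the bare tool name from a '<target>___<tool>' identifier."""
    extended_name = context.client_context.custom[TOOL_NAME_KEY]
    # partition() tolerates a missing prefix, unlike split("___")[1]
    _, separator, tool = extended_name.partition("___")
    return tool if separator else extended_name


def make_fake_context(extended_name: str):
    """Build a stand-in for the Lambda context object (local testing only)."""
    custom = {TOOL_NAME_KEY: extended_name}
    return SimpleNamespace(client_context=SimpleNamespace(custom=custom))


# "LambdaTarget" is a placeholder target name, not taken from the article
context = make_fake_context("LambdaTarget___check_warranty_status")
print(resolve_tool_name(context))
```

Using `partition` here is a design choice of the sketch: it avoids an `IndexError` if a tool name ever arrives without the target prefix.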

Example Tool: Warranty Check

Here’s the warranty check implementation (gateway/check_warranty.py):

    from typing import Optional


    def check_warranty_status(serial_number: str, customer_email: Optional[str] = None) -> str:
        """
        Check product warranty status.

        Args:
            serial_number: Product serial number
            customer_email: Customer email (optional)

        Returns:
            Warranty status information
        """
        # Mock warranty database
        warranties = {
            "SN12345": {
                "product": "Laptop Model X",
                "purchase_date": "2023-06-15",
                "warranty_period": "3 years",
                "expires": "2026-06-15",
                "status": "Active",
                "coverage": "Full hardware and software support",
            },
            # ... more entries
        }

        warranty = warranties.get(serial_number)
        if not warranty:
            return f"No warranty found for serial number: {serial_number}"

        return f"""Warranty Status for {serial_number}:
    Product: {warranty['product']}
    Purchase Date: {warranty['purchase_date']}
    Warranty Period: {warranty['warranty_period']}
    Expires: {warranty['expires']}
    Status: {warranty['status']}
    Coverage: {warranty['coverage']}
    """

In production, this would query an actual warranty database. The mock implementation demonstrates the interface.
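One detail worth noting about the mock: the status is stored as a fixed string, so it would go stale once the expiry date passes. A possible refinement, sketched here under assumptions, is to derive the status from the expiry date instead. The function name `warranty_state` and the rule that the expiry date counts as the last covered day are my own choices for illustration, not part of the original code.

```python
from datetime import date
from typing import Optional


def warranty_state(expires: str, today: Optional[date] = None) -> str:
    """Return 'Active' or 'Expired' for an ISO-formatted (YYYY-MM-DD) expiry date."""
    today = today or date.today()
    expiry = date.fromisoformat(expires)
    # Assumption: the expiry date itself is still covered
    return "Active" if today <= expiry else "Expired"


# Fixed dates keep the example deterministic
print(warranty_state("2026-06-15", today=date(2025, 1, 1)))
print(warranty_state("2026-06-15", today=date(2026, 6, 16)))
```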

API Specification

Tools are defined in gateway/api_spec.json as an OpenAPI 3.0 document, with each operation’s parameters described in JSON Schema:

    {
      "openapi": "3.0.0",
      "info": {
        "title": "AgentCore Gateway Tools",
        "version": "1.0.0"
      },
      "paths": {
        "/check_warranty_status": {
          "post": {
            "operationId": "check_warranty_status",
            "description": "Check product warranty status using serial number",
            "requestBody": {
              "content": {
                "application/json": {
                  "schema": {
                    "type": "object",
                    "properties": {
                      "serial_number": {
                        "type": "string",
                        "description": "Product serial number"
                      },
                      "customer_email": {
                        "type": "string",
                        "description": "Customer email (optional)"
                      }
                    }
                  }
                }
              }
            }
          }
        }
      }
    }

This specification enables the agent to understand:

- What the tool does

- What parameters it expects

- What data types are required
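To make those three points concrete, the sketch below walks a spec shaped like the one above and pulls out each tool's name, description, and parameter types. It is only an illustration of what information the specification carries; `summarize_tools` is a hypothetical helper, and the inline `spec` dict is a trimmed copy of the example rather than the real file.

```python
spec = {
    "paths": {
        "/check_warranty_status": {
            "post": {
                "operationId": "check_warranty_status",
                "description": "Check product warranty status using serial number",
                "requestBody": {"content": {"application/json": {"schema": {
                    "type": "object",
                    "properties": {
                        "serial_number": {"type": "string"},
                        "customer_email": {"type": "string"},
                    },
                }}}},
            }
        }
    }
}


def summarize_tools(spec: dict) -> dict:
    """Map each operationId to its description and parameter types."""
    tools = {}
    for path_item in spec.get("paths", {}).values():
        for operation in path_item.values():
            # Skip path-level entries that are not operations
            if not isinstance(operation, dict) or "operationId" not in operation:
                continue
            schema = (
                operation.get("requestBody", {})
                .get("content", {})
                .get("application/json", {})
                .get("schema", {})
            )
            params = {
                name: prop.get("type", "unknown")
                for name, prop in schema.get("properties", {}).items()
            }
            tools[operation["operationId"]] = {
                "description": operation.get("description", ""),
                "parameters": params,
            }
    return tools


for name, info in summarize_tools(spec).items():
    print(name, info["parameters"])
```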

Deploying the Gateway

The gateway deployment module handles the creation and configuration:

    from typing import List


    def create_gateway(gateway_name: str, api_spec: List) -> dict:
        """Create an Amazon Bedrock AgentCore gateway with Lambda tools."""
        # Sanitize gateway name
        gateway_name_sanitized = sanitize_agent_name(gateway_name)

        # Get Lambda ARN and Cognito config from SSM
        lambda_arn = get_ssm_parameter(f"{APP_PARAMETER_NAME}/lambda_arn")
        web_client_id = get_ssm_parameter(f"{APP_PARAMETER_NAME}/user_pool_client_id")
        m2m_client_id = get_ssm_parameter(f"{APP_PARAMETER_NAME}/cognito_m2m_client_id")
        discovery_url = get_ssm_parameter(f"{APP_PARAMETER_NAME}/cognito_discovery_url")

        # Create gateway with JWT authorization for both the web and M2M clients
        response = gateway_client.create_gateway(
            name=gateway_name_sanitized,
            authorizerConfiguration={
                "customJWTAuthorizer": {
                    "allowedClients": [web_client_id, m2m_client_id],
                    "discoveryUrl": discovery_url,
                }
            },
        )

        gateway_id = response['id']
        gateway_url = response['gatewayUrl']

        # Wait for gateway to become READY
        wait_for_gateway_available(gateway_id)

        # Create gateway target (connects to Lambda)
        target_response = gateway_client.create_gateway_target(
            gatewayIdentifier=gateway_id,
            name=f"{gateway_name_sanitized}-target",
            apiSpec=api_spec,
            awsLambda={
                "lambdaArn": lambda_arn,
                "payloadFormat": "BEDROCK_AGENTCORE",
            },
        )

        # Save configuration to SSM
        put_ssm_parameter(f"{APP_PARAMETER_NAME}/gateway_id", gateway_id)
        put_ssm_parameter(f"{APP_PARAMETER_NAME}/gateway_url", gateway_url)

        return {
            'id': gateway_id,
            'name': gateway_name_sanitized,
            'gateway_url': gateway_url,
            'gateway_arn': response['gatewayArn'],
        }

Gateway Creation Flow:

- Retrieve configuration from SSM Parameter Store

- Create gateway with Custom JWT authorization

- Wait for gateway status to become READY

- Create target linking gateway to Lambda function

- Store gateway details in SSM for runtime access
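The "wait for READY" step is the part of this flow most likely to need care, because the target cannot be attached until the gateway has finished provisioning. The article does not show `wait_for_gateway_available`, so the following is only a sketch of how such a polling helper might look. The name `wait_until_ready`, the injected `get_status` callable, and the `CREATING` and `FAILED` status strings are assumptions; only `READY` comes from the text above.

```python
import time
from typing import Callable


def wait_until_ready(
    get_status: Callable[[], str],
    timeout_seconds: float = 300.0,
    poll_interval: float = 5.0,
) -> None:
    """Poll get_status() until it returns 'READY' or the timeout elapses."""
    deadline = time.monotonic() + timeout_seconds
    while True:
        status = get_status()
        if status == "READY":
            return
        if status == "FAILED":  # assumed terminal failure status
            raise RuntimeError("Gateway creation failed")
        if time.monotonic() >= deadline:
            raise TimeoutError(f"Gateway still {status} after {timeout_seconds}s")
        time.sleep(poll_interval)


# Simulated status sequence standing in for real API responses
statuses = iter(["CREATING", "CREATING", "READY"])
wait_until_ready(lambda: next(statuses), poll_interval=0.01)
print("Gateway is ready")
```

Passing the status lookup in as a callable keeps the loop testable without AWS credentials; in a real deployment it would wrap whatever call returns the gateway's current status.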

JWT Authorization:

- Accepts tokens from both web client (users) and M2M client (services)

- Validates tokens using Cognito discovery URL

- Ensures only authorized requests can invoke tools
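To illustrate what the allowed-clients check amounts to, the sketch below decodes the payload of a token and compares its `client_id` claim against an allow-list. This is deliberately NOT real validation: it skips signature, issuer, and expiry checks, which the gateway performs using the discovery URL. It assumes the token is a Cognito access token carrying a `client_id` claim; the helper names and client IDs are hypothetical.

```python
import base64
import json


def decode_claims(token: str) -> dict:
    """Decode the payload segment of a JWT WITHOUT verifying its signature."""
    payload_b64 = token.split(".")[1]
    padding = "=" * (-len(payload_b64) % 4)  # restore stripped base64 padding
    return json.loads(base64.urlsafe_b64decode(payload_b64 + padding))


def is_allowed_client(token: str, allowed_clients: list) -> bool:
    """Check the token's client_id claim against the allow-list."""
    return decode_claims(token).get("client_id") in allowed_clients


def make_unsigned_token(claims: dict) -> str:
    """Build a fake, unsigned token for local experiments only."""
    def encode(obj: dict) -> str:
        raw = json.dumps(obj).encode()
        return base64.urlsafe_b64encode(raw).rstrip(b"=").decode()

    header = encode({"alg": "none"})
    body = encode(claims)
    return f"{header}.{body}."


# Placeholder client IDs, not real Cognito values
token = make_unsigned_token({"client_id": "example-m2m-client"})
print(is_allowed_client(token, ["example-web-client", "example-m2m-client"]))
print(is_allowed_client(token, ["example-web-client"]))
```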

Running the Gateway Deployment

    # Load API specification
    api_spec = load_api_spec("gateway/api_spec.json")

    # Create gateway
    gateway_info = create_gateway(
        gateway_name=AGENT_GATEWAY_NAME,
        api_spec=api_spec,
    )

    print(f"Gateway URL: {gateway_info['gateway_url']}")
    print(f"Gateway ID: {gateway_info['id']}")

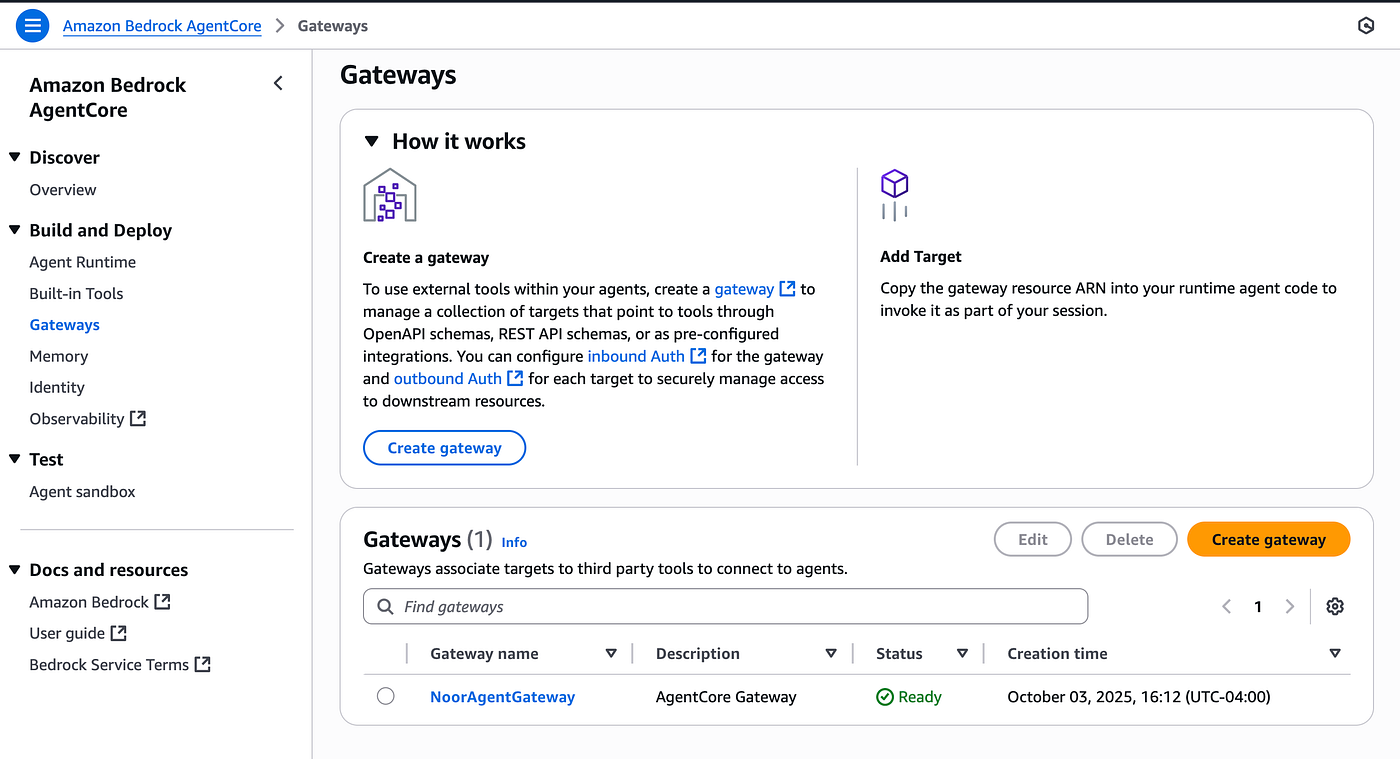

After deployment, the gateway appears in the AWS Console with a “Ready” status:

The AgentCore Gateway successfully created and ready to expose Lambda tools as MCP-compatible endpoints. The gateway provides secure access to Lambda functions through OAuth 2.0 JWT authentication.
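Since the gateway speaks MCP, a client talks to it with JSON-RPC 2.0 messages such as `tools/list` and `tools/call`. The sketch below only builds those request bodies and headers; it does not send anything. The builder function names, the `LambdaTarget` prefix, and the token value are placeholders of mine, and the prefixed tool name simply mirrors the `<target>___<tool>` format that the Lambda handler splits on.

```python
import json


def build_tools_list_request(request_id: int = 1) -> dict:
    """JSON-RPC 2.0 request asking an MCP server for its tool catalog."""
    return {"jsonrpc": "2.0", "id": request_id, "method": "tools/list"}


def build_tool_call_request(tool: str, arguments: dict, request_id: int = 2) -> dict:
    """JSON-RPC 2.0 request invoking one MCP tool with named arguments."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }


def build_headers(access_token: str) -> dict:
    """HTTP headers carrying the JWT as a bearer token."""
    return {
        "Content-Type": "application/json",
        "Authorization": f"Bearer {access_token}",
    }


# Placeholder target prefix; the real one depends on the gateway target name
call = build_tool_call_request(
    "LambdaTarget___check_warranty_status",
    {"serial_number": "SN12345"},
)
print(json.dumps(call, indent=2))
```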

AgentCore Memory: Persistent Context

Memory enables agents to remember past conversations and build personalized experiences. AgentCore Memory provides managed infrastructure with customizable strategies.

Memory Strategies

The implementation uses three complementary strategies:

1. Semantic Strategy

Stores business facts and client interactions:

- Company policies referenced

- Client account details discussed

- Important business context

2. Summary Strategy

Maintains conversation summaries:

- High-level overview of topics discussed

- Key decisions made

- Action items identified

3. User Preferences Strategy

Captures client preferences and patterns:

- Preferred communication style

- Frequently asked questions

- Client-specific preferences

Memory Hooks Architecture

Memory integration uses hooks to intercept agent execution at key points (agent/memory.py):

    from bedrock_agentcore.memory import MemoryClient
    from strands.hooks import (
        AfterInvocationEvent,
        HookProvider,
        HookRegistry,
        MessageAddedEvent,
    )


    class AgentMemoryHooks(HookProvider):
        """Memory hooks for business agent"""

        def __init__(self, memory_id: str, client: MemoryClient,
                     actor_id: str, session_id: str):
            self.memory_id = memory_id
            self.client = client
            self.actor_id = actor_id
            self.session_id = session_id
            # Map each strategy type to its first namespace template
            self.namespaces = {
                i["type"]: i["namespaces"][0]
                for i in self.client.get_memory_strategies(self.memory_id)
            }

        def retrieve_client_context(self, event: MessageAddedEvent):
            """Retrieve client context before processing query"""
            messages = event.agent.messages
            if messages[-1]["role"] == "user":
                user_query = messages[-1]["content"][0]["text"]
                all_context = []

                # Search all memory namespaces
                for context_type, namespace in self.namespaces.items():
                    memories = self.client.retrieve_memories(
                        memory_id=self.memory_id,
                        namespace=namespace.format(actorId=self.actor_id),
                        query=user_query,
                        top_k=3,
                    )
                    for memory in memories:
                        if isinstance(memory, dict):
                            content = memory.get("content", {})
                            text = content.get("text", "").strip()
                            if text:
                                all_context.append(f"[{context_type.upper()}] {text}")

                # Inject context into the query
                if all_context:
                    context_text = "\n".join(all_context)
                    original_text = messages[-1]["content"][0]["text"]
                    messages[-1]["content"][0]["text"] = (
                        f"Client Context:\n{context_text}\n\n{original_text}"
                    )

        def save_business_interaction(self, event: AfterInvocationEvent):
            """Save business interaction after agent response"""
            messages = event.agent.messages
            if len(messages) >= 2 and messages[-1]["role"] == "assistant":
                # Extract the latest response and the query that preceded it
                client_query = None
                agent_response = None
                for msg in reversed(messages):
                    if msg["role"] == "assistant" and not agent_response:
                        agent_response = msg["content"][0]["text"]
                    elif msg["role"] == "user" and not client_query:
                        client_query = msg["content"][0]["text"]
                        break

                if client_query and agent_response:
                    # Save to memory
                    self.client.create_event(
                        memory_id=self.memory_id,
                        actor_id=self.actor_id,
                        session_id=self.session_id,
                        messages=[
                            (client_query, "USER"),
                            (agent_response, "ASSISTANT"),
                        ],
                    )

        def register_hooks(self, registry: HookRegistry) -> None:
            """Register memory hooks"""
            registry.add_callback(MessageAddedEvent, self.retrieve_client_context)
            registry.add_callback(AfterInvocationEvent, self.save_business_interaction)

Hook Lifecycle:

- MessageAddedEvent: Fires when a message is added to the conversation; the hook acts only on user messages

  - Retrieves relevant memories from all strategies

  - Injects context into the user’s query

- AfterInvocationEvent: Fires after the agent responds

  - Extracts the conversation pair (user query + agent response)

  - Saves to memory for future retrieval
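The injection step can be tried in isolation, without a memory resource or an agent. The sketch below reproduces the formatting logic from the retrieval hook using plain dictionaries; `format_context`, `inject_context`, and the sample memories are hypothetical stand-ins, and the memory record shape (`content` then `text`) is taken from the hook code above.

```python
def format_context(memories_by_type: dict) -> list:
    """Turn retrieved memories into tagged lines, skipping blank entries."""
    lines = []
    for context_type, memories in memories_by_type.items():
        for memory in memories:
            text = memory.get("content", {}).get("text", "").strip()
            if text:
                lines.append(f"[{context_type.upper()}] {text}")
    return lines


def inject_context(query: str, context_lines: list) -> str:
    """Prepend the context block to the user's query, if there is any."""
    if not context_lines:
        return query
    context_text = "\n".join(context_lines)
    return f"Client Context:\n{context_text}\n\n{query}"


# Made-up memories standing in for retrieve_memories() results
fake_memories = {
    "semantic": [{"content": {"text": "Owns a Laptop Model X (SN12345)"}}],
    "summary": [{"content": {"text": "  "}}],  # blank entries are skipped
}
print(inject_context("Is my laptop still covered?", format_context(fake_memories)))
```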

Creating the Memory Resource

The memory creation module handles the setup:

    from bedrock_agentcore.memory import MemoryClient
    from bedrock_agentcore.memory.constants import StrategyType


    def create_memory(name: str, ssm_param: str):
        """Create AgentCore Memory with multiple strategies."""
        memory_client = MemoryClient(region_name=REGION)

        # Define memory strategies
        strategies = [
            {
                StrategyType.USER_PREFERENCE.value: {
                    "name": "ClientPreferences",
                    "description": "Captures client preferences and behavior patterns",
                    "namespaces": ["client/{actorId}/preferences"],
                }
            },
            {
                StrategyType.SEMANTIC.value: {
                    "name": "BusinessSemantic",
                    "description": "Stores business facts and client interactions",
                    "namespaces": ["client/{actorId}/semantic"],
                }
            },
            {
                StrategyType.SUMMARY.value: {
                    "name": "ConversationSummary",
                    "description": "Captures conversation summaries and context",
                    "namespaces": ["client/{actorId}/summary"],
                }
            },
        ]

        # Create memory resource
        response = memory_client.create_memory_and_wait(
            name=name,
            description="Business agent memory",
            strategies=strategies,
            event_expiry_days=90,
        )

        memory_id = response["id"]

        # Store memory ID in SSM
        put_ssm_parameter(ssm_param, memory_id)
        put_ssm_parameter(f"{APP_PARAMETER_NAME}/memory_name", name)

        return memory_id

Memory Configuration:

- Each strategy has its own namespace pattern using the {actorId} placeholder

- Stored conversation events expire after 90 days (event_expiry_days, configurable)

- Memory ID stored in SSM for runtime access
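The {actorId} placeholder is what keeps one client's memories separate from another's: at retrieval time the hook fills it in with `str.format`. A small sketch of that substitution follows, using the three namespace patterns from the strategy definitions; the dictionary keys, `resolve_namespaces`, and the actor ID `customer_001` are made up for the example.

```python
NAMESPACE_TEMPLATES = {
    "preferences": "client/{actorId}/preferences",
    "semantic": "client/{actorId}/semantic",
    "summary": "client/{actorId}/summary",
}


def resolve_namespaces(actor_id: str) -> dict:
    """Fill the {actorId} placeholder in every namespace template."""
    return {
        kind: template.format(actorId=actor_id)
        for kind, template in NAMESPACE_TEMPLATES.items()
    }


# "customer_001" is a placeholder actor ID
for kind, namespace in resolve_namespaces("customer_001").items():
    print(f"{kind}: {namespace}")
```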

Deploying Memory

    from agentcore_memory import create_memory
    from config import APP_PARAMETER_NAME, MEMORY_NAME

    memory_id_ssm_param = f"{APP_PARAMETER_NAME}/memory_id"

    memory_id = create_memory(
        name=MEMORY_NAME,
        ssm_param=memory_id_ssm_param,
    )

    print(f"Memory created: {memory_id}")

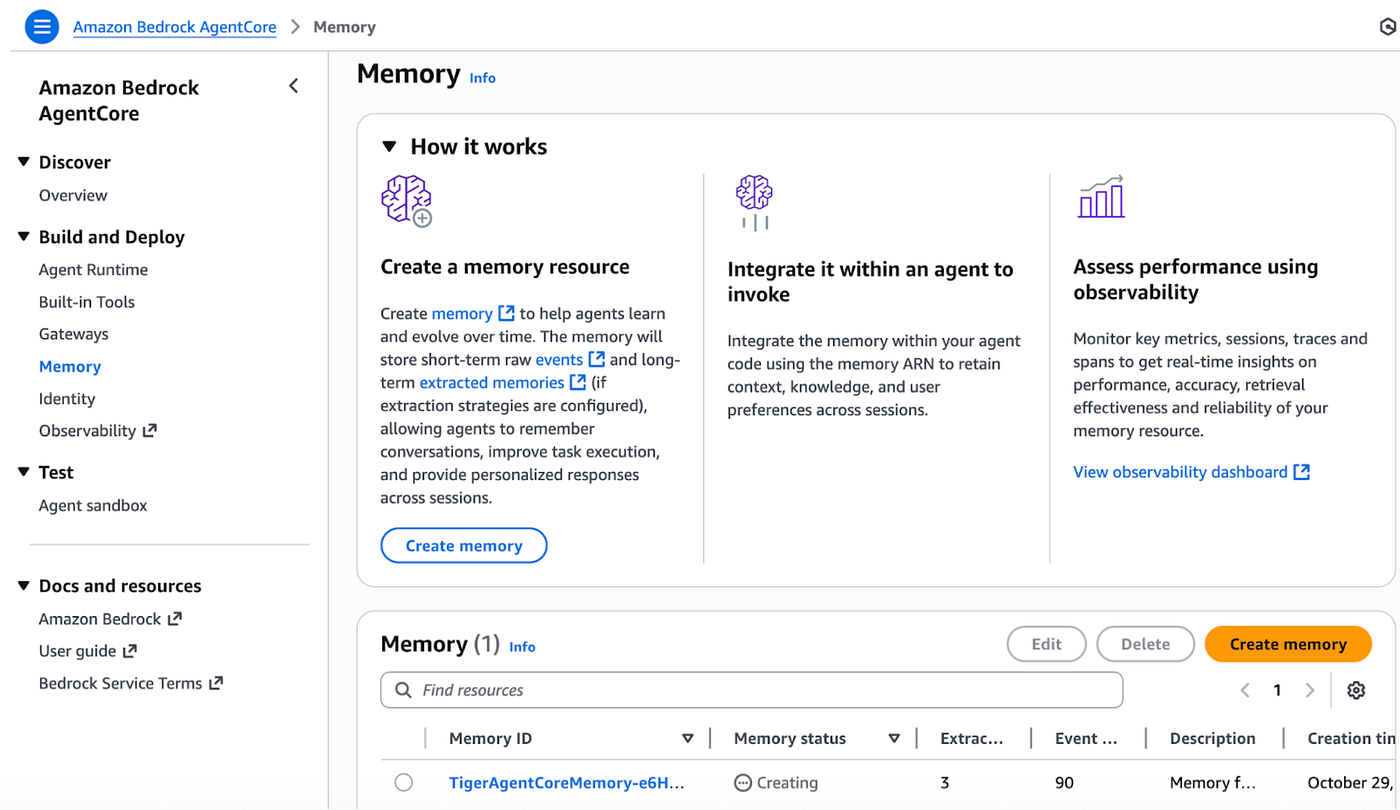

After deployment, the AgentCore Memory appears in the AWS Console:

The AgentCore Memory successfully created with three configured strategies (Semantic, Summary, and User Preferences). The memory provides persistent context storage for personalized agent interactions across sessions.

What’s Next?

Part 3 completes the journey by deploying the AgentCore Runtime with local and Gateway tools integration, building a Streamlit frontend, and testing the complete end-to-end solution.

If you have any questions, don’t hesitate to write in the comments!

Resources

I repurposed the code from the AWS samples repository linked below to build this demo. You can easily customize it to fit your use case.

Author

Noor Sabahi | Senior AI & Cloud Engineer | AWS Ambassador

#AWSBedrock #AgentCore #MCPProtocol #AWSLambda #AgentCoreGateway #AgentCoreMemory #ContextAwareAI #AgentTools #BedrockAgents #AWSAmbassador