Part 3: Runtime & Frontend

Introduction

In Part 1 and Part 2, I built the infrastructure, deployed Lambda tools via Gateway, and configured Memory for persistent context. In this final article, I complete the journey by deploying the AgentCore Runtime and building a Streamlit frontend with secure authentication.

This final article covers:

- Implementing the agent with local tools

- Integrating Gateway tools via MCP client

- Deploying the AgentCore Runtime

- Building a Streamlit frontend with Cognito authentication

- Testing the complete end-to-end solution

AgentCore Runtime: The Agent Engine

The Runtime is where your agent logic lives. It’s a serverless container that:

- Hosts your agent code using any framework (Strands, LangChain, CrewAI, etc.)

- Executes local tools directly within the container

- Connects to Gateway tools via MCP (Model Context Protocol)

- Integrates with Memory for context persistence

- Scales automatically with demand

Implementing the Agent

Agent with Local Tools

The agent (agent/agent.py) includes three local tools:

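import os

from strands import Agent
from strands.models import BedrockModel
from strands.tools import tool

# Assumption: MODEL_ID is not defined in this listing; here it is read from an environment variable
MODEL_ID = os.environ["MODEL_ID"]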

# System prompt defining agent behavior
SYSTEM_PROMPT = """You are a helpful and professional business assistant.

Your role is to:
- Provide accurate information using the tools available to you
- Support clients with company policies, procedures, and account information
- Analyze documents and extract relevant information
- Be friendly, patient, and understanding with clients

You have access to:
1. get_company_policies() - Company policies and procedures
2. get_client_information() - Client account information
3. analyze_document() - Document analysis
4. check_warranty_status() - Product warranty status (via Gateway)
5. web_search() - Web search for updated information (via Gateway)

Always use the appropriate tool to get accurate information."""

@tool
def get_company_policies(policy_type: str = "general") -> str:
    """
    Get company policies and procedures information.

    Args:
        policy_type: Type of policy (e.g., 'general', 'hr', 'it', 'finance')

    Returns:
        Formatted policy information
    """
    # Your logic here: Query policy database or document store
    # Return formatted policy information based on policy_type
    pass

@tool
def get_client_information(client_id: str) -> str:
    """
    Get client account information and details.

    Args:
        client_id: Client identifier (email, account number, or name)

    Returns:
        Formatted client information
    """
    # Your logic here: Query CRM system or customer database
    # Return formatted client information
    pass

@tool
def analyze_document(document_type: str, content: str | None = None) -> str:
    """
    Analyze and extract information from documents.

    Args:
        document_type: Type of document (e.g., 'contract', 'invoice', 'report')
        content: Document content to analyze (optional)

    Returns:
        Analysis results
    """
    # Your logic here: Use document processing service or NLP models
    # Extract and return key information from the document
    pass

def create_agent(memory_hooks=None):
    """Create the main agent with all tools and optional memory hooks."""
    model = BedrockModel(model_id=MODEL_ID)
    agent = Agent(
        model=model,
        tools=[get_company_policies, get_client_information, analyze_document],
        system_prompt=SYSTEM_PROMPT,
        hooks=[memory_hooks] if memory_hooks else [],
    )
    return agent

Key Points:

- Tools are decorated with @tool for automatic schema generation

- Simple implementations demonstrate the interface (production would query real databases)

- System prompt guides agent behavior and tool usage

- Memory hooks are optional and injected during agent creation
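As a rough illustration of the "simple implementation" idea above, the sketch below fills in get_company_policies with an in-memory dictionary. The _POLICIES data and its wording are hypothetical, and the @tool decorator is left off so the snippet runs without Strands installed. In the agent you would keep the decorator and swap the dictionary for a real policy store.

```python
# Hypothetical in-memory policy store; a real deployment would query a database.
_POLICIES = {
    "general": "Business hours are 9:00-17:00, Monday to Friday.",
    "hr": "Leave requests must be submitted two weeks in advance.",
    "it": "Passwords must be rotated every 90 days.",
}


def get_company_policies(policy_type: str = "general") -> str:
    """Return formatted policy text for the requested policy type."""
    key = policy_type.strip().lower()
    policy = _POLICIES.get(key)
    if policy is None:
        # Tell the model which types exist so it can retry with a valid one.
        available = ", ".join(sorted(_POLICIES))
        return f"No policy found for '{policy_type}'. Available types: {available}"
    return f"{key.upper()} policy: {policy}"


if __name__ == "__main__":
    print(get_company_policies("hr"))
    print(get_company_policies("legal"))
```

Returning a readable message for unknown policy types, rather than raising, gives the model something it can act on in its next turn.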

MCP Gateway Client Integration

The agent needs to discover and invoke Gateway tools via MCP (agent/mcp_gateway.py):

import logging
from typing import Optional

from mcp.client.streamable_http import streamablehttp_client
from strands.tools.mcp import MCPClient

logger = logging.getLogger(__name__)

# APP_PARAMETER_NAME and get_m2m_access_token() are defined elsewhere in the project (not shown here)

def create_mcp_client_with_gateway(gateway_url: str, bearer_token: str) -> Optional[MCPClient]:
    """
    Create an MCP client for AgentCore Gateway using OAuth bearer token.

    Args:
        gateway_url: Gateway endpoint URL
        bearer_token: OAuth bearer token (from M2M client credentials)

    Returns:
        MCPClient instance or None if initialization fails
    """
    try:
        # Create MCP client with OAuth bearer token
        mcp_client = MCPClient(
            lambda: streamablehttp_client(
                gateway_url,
                headers={"Authorization": f"Bearer {bearer_token}"},
            )
        )
        # Start the MCP client connection
        mcp_client.start()
        return mcp_client
    except Exception:
        # Log the failure instead of swallowing it silently
        logger.exception("Failed to start MCP client for gateway %s", gateway_url)
        return None

def create_mcp_gateway_client_return_tools(get_ssm_func):
    """
    Initialize MCP client and return tools using M2M authentication.

    Uses machine-to-machine OAuth client credentials to obtain access token.

    Returns:
        List of MCP tools or empty list if initialization fails
    """
    try:
        # Get gateway URL from SSM
        gateway_url = get_ssm_func(f"{APP_PARAMETER_NAME}/gateway_url")

        # Get M2M access token for service-to-service auth
        m2m_access_token = get_m2m_access_token()

        # Create MCP client with M2M token
        mcp_client = create_mcp_client_with_gateway(gateway_url, m2m_access_token)
        if not mcp_client:
            return []

        # Get MCP tools from gateway
        mcp_tools = mcp_client.list_tools_sync()
        return mcp_tools
    except Exception:
        logger.exception("Failed to load tools from the Gateway")
        return []

Authentication Flow:

- Runtime retrieves gateway URL from SSM

- Generates M2M access token using client credentials flow

- Creates MCP client with bearer token in headers

- Lists available tools from Gateway

- Returns tools for agent to use
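The listing calls get_m2m_access_token() without showing it. As a hedged sketch of the token step, the OAuth 2.0 client credentials grant is a form-encoded POST to the authorization server's token endpoint, with the client ID and secret sent as HTTP Basic credentials. The function name build_token_request, the example domain, and the scope are placeholders rather than values from this project, and the sketch only builds the request without sending it.

```python
import base64
import urllib.parse
import urllib.request


def build_token_request(token_url: str, client_id: str, client_secret: str,
                        scope: str = "") -> urllib.request.Request:
    """Build (but do not send) an OAuth 2.0 client-credentials token request."""
    # Client ID and secret travel as HTTP Basic credentials.
    credentials = f"{client_id}:{client_secret}".encode("utf-8")
    basic = base64.b64encode(credentials).decode("ascii")

    form = {"grant_type": "client_credentials"}
    if scope:
        form["scope"] = scope
    body = urllib.parse.urlencode(form).encode("ascii")

    return urllib.request.Request(
        token_url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Basic {basic}",
            "Content-Type": "application/x-www-form-urlencoded",
        },
    )


if __name__ == "__main__":
    # Placeholder values for illustration only.
    req = build_token_request(
        "https://example-domain.auth.us-east-1.amazoncognito.com/oauth2/token",
        client_id="example-client-id",
        client_secret="example-secret",
        scope="example-resource/invoke",
    )
    print(req.get_method(), req.full_url)
```

Sending the request with urllib.request.urlopen and reading the access_token field from the JSON response would complete the step. Access tokens expire, so a long-running runtime would likely want to cache the token and refresh it before expiry.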

AgentCore Runtime Handler

The AgentCore runtime handler (agent/runtime.py) orchestrates everything:

import uuid

from bedrock_agentcore.runtime import BedrockAgentCoreApp

# create_agent() and get_memory_hooks() come from the project's own modules (imports not shown)

# Initialize the AgentCore Runtime App
app = BedrockAgentCoreApp()

# Module-level agent, created lazily on the first request
agent = None

def initialize_agent_for_session(actor_id: str, session_id: str):
    """Initialize agent with dynamic memory hooks for specific user session"""
    global agent

    # Create memory hooks with user-specific context
    memory_hooks = get_memory_hooks(actor_id, session_id)

    # Create agent with memory integration and Gateway tools
    agent = create_agent(memory_hooks=memory_hooks)

    # Set agent state for session tracking
    agent.state.set("actor_id", actor_id)
    agent.state.set("session_id", session_id)
    return agent

@app.entrypoint
def invoke(payload):
    """
    AgentCore Runtime entrypoint function with dynamic user session support

    Args:
        payload: Contains user input, actor_id, and session_id
    """
    global agent

    # Extract request parameters
    user_input = payload.get("prompt", "")
    actor_id = payload.get("actor_id", "default_user")
    session_id = payload.get("session_id", str(uuid.uuid4()))

    # Initialize agent on first request or if session changed
    if agent is None:
        agent = initialize_agent_for_session(actor_id, session_id)
    else:
        current_actor_id = agent.state.get("actor_id")
        current_session_id = agent.state.get("session_id")
        if current_actor_id != actor_id or current_session_id != session_id:
            # Reinitialize for new user or session
            agent = initialize_agent_for_session(actor_id, session_id)

    # Invoke agent with user input
    response = agent(user_input)
    return response.message["content"][0]["text"]

if __name__ == "__main__":
    app.run()

Runtime Lifecycle:

- Container starts, app initializes

- First request arrives with user query

- Agent initializes with memory hooks for specific actor_id

- Gateway tools loaded via MCP client

- Request processed with full context

- Response returned to frontend

- Subsequent requests reuse agent if same session
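The reuse rule in the last step can be isolated as a small pure function. This is a simplified stand-in for the check inside invoke, with plain dictionaries in place of the Strands agent state; should_reinitialize and resolve_session_id are hypothetical names. It also shows a consequence of the handler's default: when the payload carries no session_id, a fresh UUID is generated on every request, so the agent is rebuilt each time.

```python
import uuid
from typing import Optional


def resolve_session_id(payload: dict) -> str:
    """Mirror the handler's default: a new UUID when session_id is absent."""
    return payload.get("session_id", str(uuid.uuid4()))


def should_reinitialize(current: Optional[dict], actor_id: str, session_id: str) -> bool:
    """Return True when no agent exists yet or the actor/session has changed."""
    if current is None:
        return True
    return current["actor_id"] != actor_id or current["session_id"] != session_id


if __name__ == "__main__":
    state = None
    requests = [
        {"prompt": "hi", "actor_id": "alice", "session_id": "s-1"},
        {"prompt": "again", "actor_id": "alice", "session_id": "s-1"},
        {"prompt": "no session", "actor_id": "alice"},
    ]
    for payload in requests:
        actor_id = payload.get("actor_id", "default_user")
        session_id = resolve_session_id(payload)
        rebuilt = should_reinitialize(state, actor_id, session_id)
        if rebuilt:
            # Stand-in for initialize_agent_for_session()
            state = {"actor_id": actor_id, "session_id": session_id}
        print(payload["prompt"], "-> rebuilt" if rebuilt else "-> reused")
```

For the reuse path to be taken, the caller needs to send the same session_id on every request in a conversation.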

Deploying the Runtime

The runtime deployment module handles the setup:

from bedrock_agentcore_starter_toolkit import Runtime

def create_agent_runtime(agent_name: str,
                         entrypoint: str = 'agent/runtime.py',
                         requirements: str = 'requirements.txt'):
    """Create an AgentCore Runtime agent."""
    # Get configuration from SSM
    ...

    # Initialize runtime toolkit
    agentcore_runtime = Runtime()

    # Configure deployment
    agentcore_runtime.configure(
        entrypoint=entrypoint,
        execution_role=execution_role_arn,
        auto_create_ecr=True,
        requirements_file=requirements,
        region=REGION,
        agent_name=agent_name,
        authorizer_configuration={
            "customJWTAuthorizer": {
                "allowedClients": [web_client_id],
                "discoveryUrl": discovery_url,
            }
        },
    )

    # Get memory and gateway configuration
    ...

    # Launch agent with environment variables
    launch_result = agentcore_runtime.launch()
    return launch_result

Deployment Process:

- Builds Docker container with agent code and dependencies

- Pushes to ECR (Elastic Container Registry)

- Creates AgentCore Runtime with JWT authorization

- Returns runtime ARN for invocation
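For the JWT authorization step, the discoveryUrl passed to configure points at the identity provider's OpenID Connect discovery document. Assuming a Cognito user pool, as used in this series, that URL follows the pattern sketched below. The helper names and the example IDs are hypothetical; in the deployment module these values come from SSM.

```python
def cognito_discovery_url(region: str, user_pool_id: str) -> str:
    """OpenID Connect discovery URL for a Cognito user pool."""
    return (
        f"https://cognito-idp.{region}.amazonaws.com/"
        f"{user_pool_id}/.well-known/openid-configuration"
    )


def build_authorizer_configuration(region: str, user_pool_id: str,
                                   allowed_client_ids: list) -> dict:
    """Assemble the dict shape used for authorizer_configuration in the listing above."""
    return {
        "customJWTAuthorizer": {
            # Only tokens issued to these app clients are accepted.
            "allowedClients": list(allowed_client_ids),
            "discoveryUrl": cognito_discovery_url(region, user_pool_id),
        }
    }


if __name__ == "__main__":
    # Placeholder IDs for illustration only.
    config = build_authorizer_configuration(
        "us-east-1", "us-east-1_EXAMPLE", ["example-web-client-id"]
    )
    print(config["customJWTAuthorizer"]["discoveryUrl"])
```

Listing only the web client in allowedClients means tokens issued to other app clients in the same user pool are rejected by the Runtime.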

Deploy the Runtime

from agentcore_agent_runtime import create_agent_runtime
from config import AGENT_RUNTIME_NAME

runtime = create_agent_runtime(
    agent_name=AGENT_RUNTIME_NAME,
    entrypoint='agent/runtime.py',
    requirements='requirements.txt'
)
print(f"Runtime ARN: {runtime.agent_arn}")

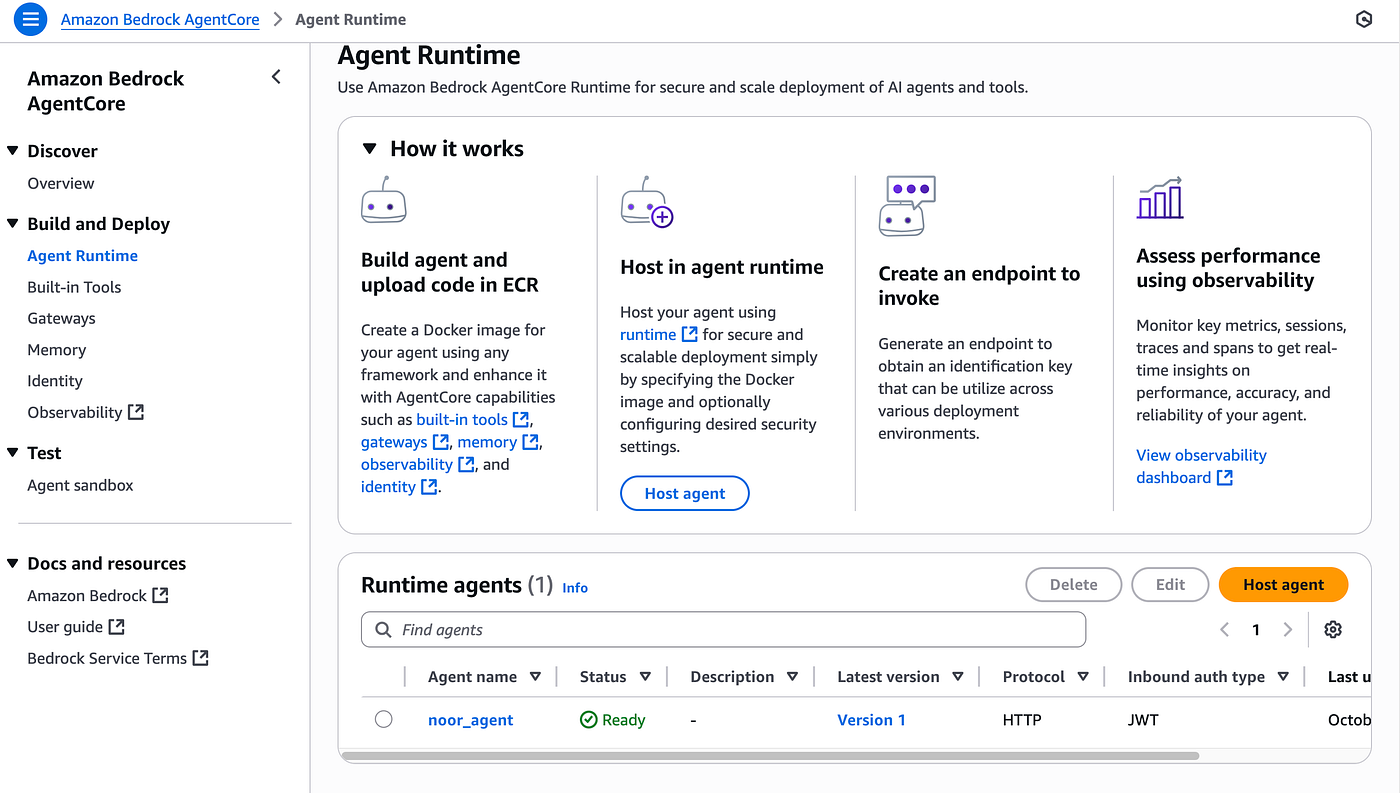

After deployment, the AgentCore Runtime appears in the AWS Console:

The AgentCore Runtime successfully deployed and ready to process requests. The runtime is configured with JWT authorization, memory integration, and gateway tools access.

Building the Streamlit Frontend

The frontend (frontend/main.py) provides a chat interface with Cognito authentication:

import uuid

import streamlit as st
from streamlit_cognito_auth import CognitoAuthenticator

from chat import invoke_endpoint_streaming

# `secret` (Cognito pool and client IDs) and `agent_arn` are loaded elsewhere (not shown)

# Initialize Cognito authenticator
authenticator = CognitoAuthenticator(
    pool_id=secret['pool_id'],
    app_client_id=secret['client_id'],
    app_client_secret=None,  # Public client - no secret
)

# Authenticate user
is_logged_in = authenticator.login()
if not is_logged_in:
    st.error("Login failed. Please check your credentials.")
    st.stop()

# Keep one session_id per browser session so the runtime can reuse the agent
if "session_id" not in st.session_state:
    st.session_state["session_id"] = str(uuid.uuid4())

# Chat interface
st.title("🤖 Business Assistant")

if prompt := st.chat_input("Ask me anything..."):
    # Prepare payload with actor_id and session_id
    payload = {
        "prompt": prompt,
        "actor_id": authenticator.get_username(),
        "session_id": st.session_state["session_id"],
    }
    bearer_token = st.session_state.get("auth_access_token")

    # Stream response from AgentCore Runtime
    for chunk in invoke_endpoint_streaming(
        agent_arn=agent_arn,
        payload=payload,
        bearer_token=bearer_token,
    ):
        st.write(chunk)

Frontend Features:

- Cognito Authentication: Users log in with username/password

- Session Management: Each user gets a unique session_id

- Actor ID: Username used as actor_id for memory isolation

- Streaming Responses: Real-time response display

- Bearer Token: Access token sent with each request
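The invoke_endpoint_streaming helper is imported from chat but not shown. The sketch below is one way such a helper might prepare its HTTP request. It assumes the AgentCore data-plane URL pattern and session header used in AWS samples, so treat the URL shape, the qualifier=DEFAULT query parameter, and the header name as assumptions to verify against current AWS documentation. build_invocation_request is a hypothetical name, and the request is only constructed, not sent.

```python
import json
import urllib.parse
import urllib.request


def build_invocation_request(agent_arn: str, region: str, bearer_token: str,
                             session_id: str, payload: dict) -> urllib.request.Request:
    """Build (but do not send) a bearer-token request to an AgentCore Runtime."""
    # The ARN contains ':' and '/', so it is fully percent-encoded in the path.
    escaped_arn = urllib.parse.quote(agent_arn, safe="")
    # Assumed URL pattern; verify against the AWS documentation.
    url = (
        f"https://bedrock-agentcore.{region}.amazonaws.com"
        f"/runtimes/{escaped_arn}/invocations?qualifier=DEFAULT"
    )
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        method="POST",
        headers={
            # Cognito access token obtained at login
            "Authorization": f"Bearer {bearer_token}",
            "Content-Type": "application/json",
            # Assumed header name for the runtime session
            "X-Amzn-Bedrock-AgentCore-Runtime-Session-Id": session_id,
        },
    )


if __name__ == "__main__":
    # Placeholder ARN, token, and session for illustration only.
    req = build_invocation_request(
        agent_arn="arn:aws:bedrock-agentcore:us-east-1:123456789012:runtime/example",
        region="us-east-1",
        bearer_token="example-access-token",
        session_id="00000000-0000-0000-0000-000000000000",
        payload={"prompt": "Hello", "actor_id": "example-user"},
    )
    print(req.full_url)
```

Because the Runtime was deployed with a JWT authorizer, the request carries the user's access token instead of AWS SigV4 credentials.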

Running the Frontend

cd frontend

streamlit run main.py

The login interface opens at http://localhost:8501. Log in with your test user credentials.

Complete System in Action

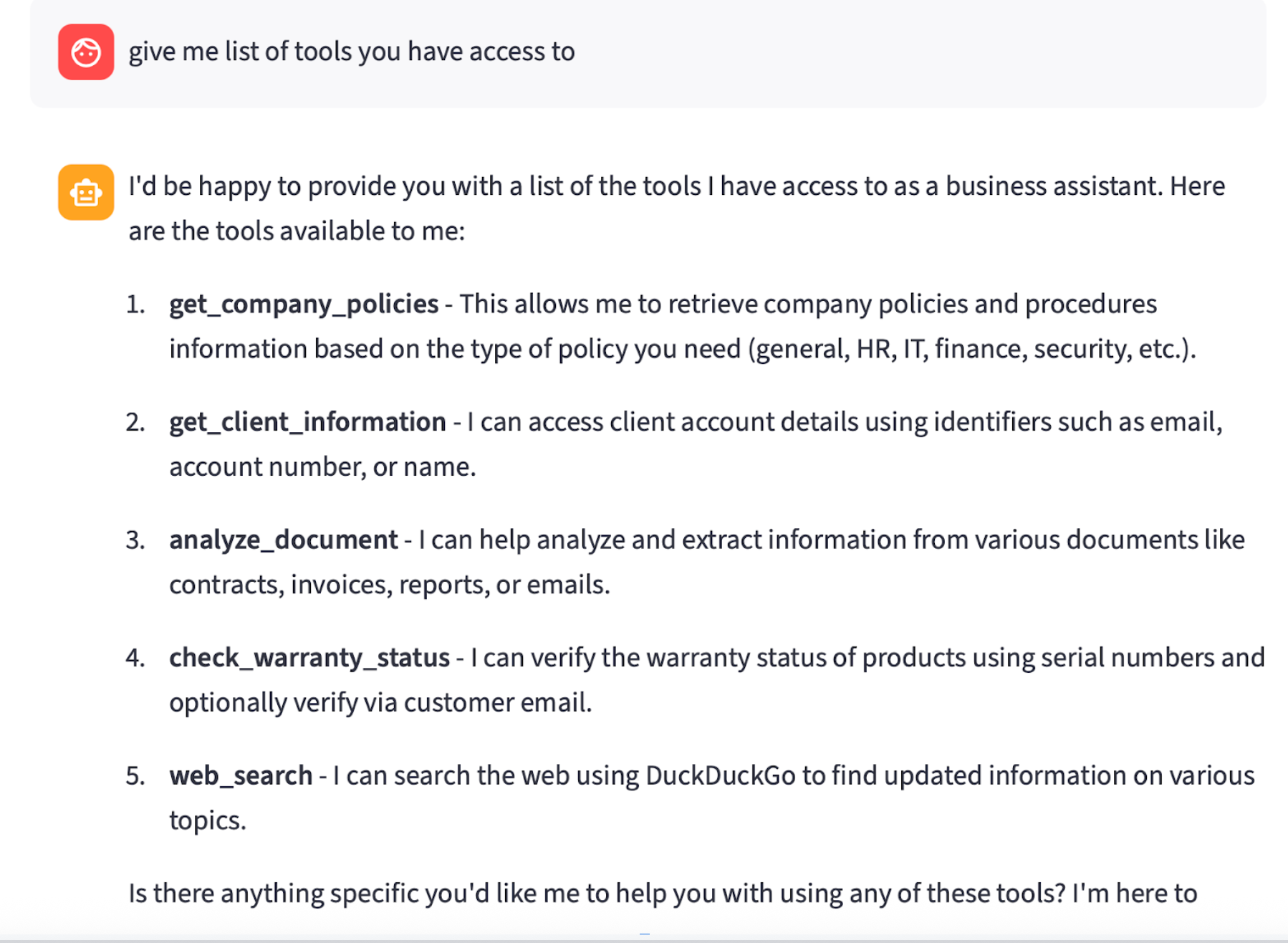

After deploying all resources (Cognito, Gateway, Memory, Runtime, and Frontend), the system is ready for user interactions. The screenshot below shows the Streamlit frontend after user authentication, demonstrating the agent’s capabilities:

The Streamlit frontend showing a user asking for a list of available tools. The agent successfully responds with all five tools (three local tools and two Gateway tools), demonstrating complete system integration with Cognito authentication, memory, and both local and Gateway tool access.

Performance and Observability

The Runtime automatically integrates with AgentCore Observability:

- OpenTelemetry traces for request flow visualization

- CloudWatch logs for debugging

- Metrics for latency and error rates

- Tool execution traces showing which tools were called and their performance

Access logs in CloudWatch Log Group: /aws/bedrock-agentcore/runtime/{runtime_name}

Conclusion

This series covered building a complete, production-ready AI agent chatbot on the AgentCore platform. In this implementation, I repurposed examples from the AWS documentation to create a comprehensive use case and demo, with deep dives into specific aspects such as Cognito authentication and Gateway integration. I hope you find it useful for building your own AI agents with Amazon Bedrock AgentCore.

Key Takeaways

I found AgentCore to be a well-organized, structured way to build agentic applications. In production, it handles infrastructure provisioning for you, separates concerns cleanly through AgentCore Gateway, and manages conversational context through AgentCore Memory. Combined with JWT-based authorization, automatic scaling, and the logs and traces provided by Observability, this makes it a strong choice for enterprise-grade AI agent deployments.

What’s Next?

In the next implementation, I’m planning to explore multi-agent orchestration using Amazon Bedrock AgentCore. This architecture enables a single orchestrator agent to coordinate multiple specialized agents, each with its own tools and expertise. The orchestrator agent delegates complex tasks to appropriate sub-agents, aggregates their responses, and delivers a comprehensive solution — unlocking powerful workflows for enterprise AI systems.

If you have any questions, don’t hesitate to write in the comments!

Author

Noor Sabahi | Senior AI & Cloud Engineer | AWS Ambassador
